Since my old video streaming tutorial gets a lot of views, I wanted to add some new info and an introduction. If you are building a robot and need to stream video, this is for you! Why Android to desktop? Android: The Android device I use is an old, old v21 phone. I can […]

Category Archives: android

Android data analysis and error handling

The featured picture for this post is actually a CNC project where my end mill broke moments before completing. But if you’re an Android developer, you have probably seen the little gear icon in your email inbox, or more recently emails from Fabric. Scroll down to the bold title to skip my rambling musings. These […]

Stream Video From Android Part 8 – Tips, Tricks and Tests

Want a little bit more? You got it. A bit of backstory on my experience: I wrote these blog posts because the reference material on these subjects seemed to be written by experts for experts, with no bridge for the beginner to cross. This article, plus the resources I mentioned in the first post […]

Stream Video From Android Part 7 – Depacketize and Display

Getting those packets onto a screen. There will be one more post after this covering some extra classes and techniques I used to get this done, so if I gloss over something here, make sure to check there to get your code straight. -> Get the videodecoder code here <- -> Get the imagedecoder […]
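On the receiving side, depacketizing starts by reading the fixed 12-byte RTP header (layout from RFC 3550) so you know where the payload begins and whether packets arrived in order. Here is a minimal Java sketch of just that step. `RtpHeaderReader` is a hypothetical name, not the class from the post, and it assumes no header extension and ignores padding.

```java
import java.nio.ByteBuffer;

/** Hypothetical helper: reads the fixed RTP header (RFC 3550) from one received packet. */
final class RtpHeaderReader {
    final int version;        // should be 2
    final boolean marker;     // for H.264 video, set on the last packet of a frame
    final int payloadType;    // 7 bits, e.g. a dynamic type like 96
    final int sequenceNumber; // 16 bits, used to spot lost or reordered packets
    final long timestamp;     // 32 bits, unsigned
    final long ssrc;          // 32 bits, unsigned stream identifier
    final int payloadOffset;  // index where the payload (e.g. an H.264 NAL unit) starts

    RtpHeaderReader(byte[] packet) {
        if (packet.length < 12) {
            throw new IllegalArgumentException("packet is shorter than the 12-byte RTP header");
        }
        ByteBuffer buf = ByteBuffer.wrap(packet); // ByteBuffer defaults to big-endian (network order)
        int b0 = buf.get() & 0xFF;
        int b1 = buf.get() & 0xFF;
        version = b0 >>> 6;
        int csrcCount = b0 & 0x0F;
        marker = (b1 & 0x80) != 0;
        payloadType = b1 & 0x7F;
        sequenceNumber = buf.getShort() & 0xFFFF;
        timestamp = buf.getInt() & 0xFFFFFFFFL;
        ssrc = buf.getInt() & 0xFFFFFFFFL;
        // Skip any CSRC entries; this sketch assumes no header extension (X bit) and ignores padding.
        payloadOffset = 12 + 4 * csrcCount;
        if (payloadOffset > packet.length) {
            throw new IllegalArgumentException("CSRC list runs past the end of the packet");
        }
    }
}
```

Everything from `payloadOffset` onward is what you would pass along to the decoder (after undoing any H.264 fragmentation).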

Stream Video From Android Part 6 – Packetize RTP

I know, you deserve a nap, but please hang in there. The file I’m referencing is in the last post if you need it. Also, MAJOR WARNING HERE!!! I did not test my RTP packet code against other software. There may be errors because I simply wrote what seemed to make sense and then wrote […]
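For reference, here is a minimal sketch of writing the fixed RTP header in front of a payload, following the RFC 3550 layout: version 2, marker bit, 7-bit payload type, 16-bit sequence number, 32-bit timestamp and 32-bit SSRC. `RtpPacketizer` and its methods are hypothetical names, not the post's code, and the sketch does not do the H.264-specific fragmentation described in RFC 6184.

```java
import java.nio.ByteBuffer;

/** Hypothetical helper: prepends a fixed 12-byte RTP header (RFC 3550) to a payload. */
final class RtpPacketizer {
    static byte[] buildPacket(int payloadType, boolean marker, int sequenceNumber,
                              long timestamp, long ssrc, byte[] payload) {
        ByteBuffer buf = ByteBuffer.allocate(12 + payload.length); // big-endian by default
        buf.put((byte) 0x80);                                            // V=2, P=0, X=0, CC=0
        buf.put((byte) ((marker ? 0x80 : 0x00) | (payloadType & 0x7F))); // M bit + 7-bit payload type
        buf.putShort((short) sequenceNumber);                            // low 16 bits
        buf.putInt((int) timestamp);                                     // low 32 bits
        buf.putInt((int) ssrc);                                          // low 32 bits
        buf.put(payload);
        return buf.array();
    }

    /** Sequence numbers go up by one per packet and wrap after 65535. */
    static int nextSequence(int sequenceNumber) {
        return (sequenceNumber + 1) & 0xFFFF;
    }
}
```

If you test against other software, two things worth checking first are that the sequence number increases by one for every packet and that H.264 over RTP uses a 90 kHz timestamp clock.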

Stream Video From Android Part 4 – Parse Boxes and SPS or PPS

Alright, you have the taste of blood in your mouth and you like it. Let’s dive deeper and actually try parsing some data. -> source code here <- In our Android app we have saved the file, and now we are passing it to our SDP maker. Ironically, I do not actually use Session Description Protocol. […]
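In an mp4 file, the H.264 SPS and PPS are usually stored in the `avcC` box as an AVCDecoderConfigurationRecord (ISO/IEC 14496-15). Here is a minimal sketch that pulls them out of that record, given the box body (the bytes after the 8-byte box header). `AvcConfigParser` is a hypothetical name and the post’s own parser may work differently. The NAL unit type is the low 5 bits of the first byte: 7 is SPS, 8 is PPS.

```java
import java.nio.ByteBuffer;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper: extracts SPS and PPS NAL units from an avcC box body. */
final class AvcConfigParser {
    final List<byte[]> sps = new ArrayList<>();
    final List<byte[]> pps = new ArrayList<>();
    final int nalLengthSize; // size of the length prefix on each NAL unit in the samples

    AvcConfigParser(byte[] avcC) {
        ByteBuffer buf = ByteBuffer.wrap(avcC);
        buf.get(); // configurationVersion (normally 1)
        buf.get(); // AVCProfileIndication
        buf.get(); // profile_compatibility
        buf.get(); // AVCLevelIndication
        nalLengthSize = (buf.get() & 0x03) + 1; // lengthSizeMinusOne in the low 2 bits
        int numSps = buf.get() & 0x1F;          // low 5 bits
        for (int i = 0; i < numSps; i++) {
            sps.add(readNal(buf));
        }
        int numPps = buf.get() & 0xFF;
        for (int i = 0; i < numPps; i++) {
            pps.add(readNal(buf));
        }
        // Any extra fields some profiles append after the PPS list are ignored here.
    }

    private static byte[] readNal(ByteBuffer buf) {
        int len = buf.getShort() & 0xFFFF; // each entry has a 16-bit length prefix
        byte[] nal = new byte[len];
        buf.get(nal);
        return nal;
    }

    /** Low 5 bits of the NAL header byte: 7 = SPS, 8 = PPS. */
    static int nalType(byte[] nal) {
        return nal[0] & 0x1F;
    }
}
```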

Stream Video From Android Part 3 – Understanding h264 in mp4

The last section was tough, and it only gets tougher. As I said, the mp4 file is streamed and then the data needed to decode the file is written after. Like most file types, mp4 is constructed in parts. You frequently hear the term file header, which is a section that explains the file’s contents. With mp4 […]
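Those parts are called boxes. Each box starts with a 4-byte big-endian size followed by a 4-character type such as `ftyp`, `moov` or `mdat`; a size of 1 means a 64-bit size follows the type, and a size of 0 means the box runs to the end of the file. A minimal sketch that walks the top-level boxes of a file already loaded into memory (the `Mp4BoxLister` name and the `type:size` output format are just for illustration):

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper: lists top-level mp4 boxes as "type:size" strings. */
final class Mp4BoxLister {
    static List<String> listTopLevelBoxes(byte[] file) {
        List<String> boxes = new ArrayList<>();
        ByteBuffer buf = ByteBuffer.wrap(file); // big-endian by default
        while (buf.remaining() >= 8) {
            int start = buf.position();
            long size = buf.getInt() & 0xFFFFFFFFL;
            byte[] typeBytes = new byte[4];
            buf.get(typeBytes);
            String type = new String(typeBytes, StandardCharsets.US_ASCII);
            if (size == 1) {            // 64-bit "largesize" follows the type
                if (buf.remaining() < 8) break;
                size = buf.getLong();
            } else if (size == 0) {     // box extends to the end of the file
                size = file.length - start;
            }
            long headerLen = buf.position() - start;
            if (size < headerLen || start + size > file.length) break; // truncated or corrupt
            boxes.add(type + ":" + size);
            buf.position((int) (start + size)); // jump to the next sibling box
        }
        return boxes;
    }
}
```

On a file recorded the way described above, you would expect `moov` (the decoding info) to show up after `mdat` (the video data).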

Stream Video From Android Part 1 – Why & How

2020 update: where I go over better methods on Android and give some quick ffmpeg tips. I’m not sure how you got here, but if you need to stream or dissect video, this might be your lucky day. Whenever I write a new article, my biggest joy is choosing an image to represent […]

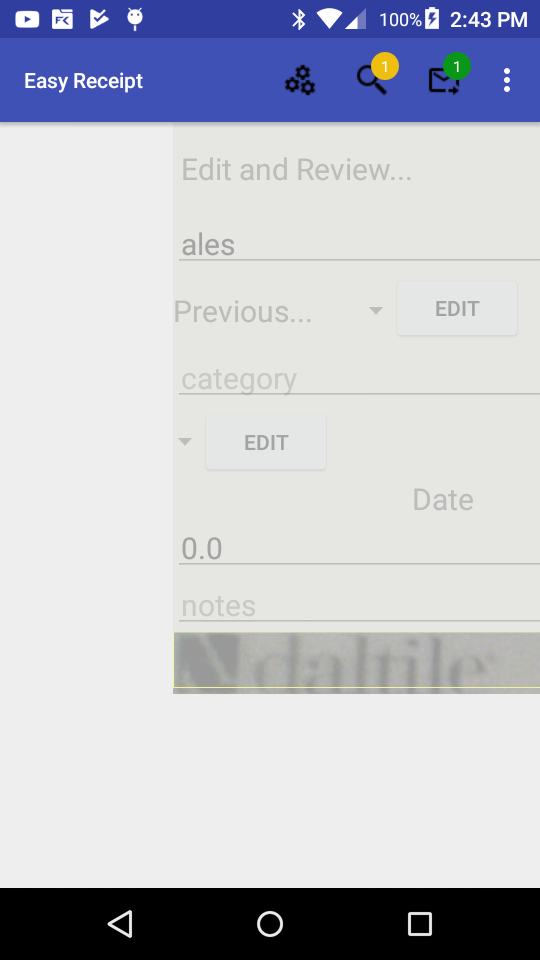

Android Swipe Functionality

I needed my easy receipt app to do a few simple things:

- A swipeable way for the user to review the receipts.
- This view needed to show data and an image.
- It needed left/right detection as well.

The basic components for this are as follows: Layouts - I used two […]
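The rest of the approach is cut off here, but the left/right detection part mostly comes down to comparing horizontal and vertical travel. A hypothetical pure-Java helper (the `SwipeClassifier` name and the `minDistancePx` threshold are assumptions, not the post's code) that you could feed with the start and end coordinates from, for example, `GestureDetector.OnGestureListener.onFling`:

```java
/** Hypothetical helper: classifies a gesture as a left or right swipe. */
final class SwipeClassifier {
    enum Direction { LEFT, RIGHT, NONE }

    static Direction classify(float startX, float startY, float endX, float endY,
                              float minDistancePx) {
        float dx = endX - startX;
        float dy = endY - startY;
        // Too short, or more vertical than horizontal: not a left/right swipe.
        if (Math.abs(dx) < minDistancePx || Math.abs(dx) <= Math.abs(dy)) {
            return Direction.NONE;
        }
        // Finger moved toward smaller x = swipe left.
        return dx < 0 ? Direction.LEFT : Direction.RIGHT;
    }
}
```

Keeping the decision out of the listener makes it easy to tune the threshold without touching the view code.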

Android Camera2 Api

If you are building an app with the Android camera2 API, here are my thoughts after fighting with it. (Full code at bottom for copy-paste junkies.) This API was a little verbose; I ended up using about 1200 lines of code. It could probably be done more simply, but if you want something custom, here is […]